Abstract

3D GAN inversion projects a single image into the latent space of a pre-trained 3D GAN to achieve single-shot novel view synthesis, which requires high fidelity in visible regions and both realism and multi-view consistency in occluded regions. However, existing methods focus on reconstructing the visible regions, while the generation of occluded regions relies only on the generative prior of the 3D GAN. As a result, the generated occluded regions often exhibit poor quality because of the information loss caused by the low bit-rate latent code. To address this, we introduce a warping-and-inpainting strategy that incorporates image inpainting into 3D GAN inversion, and propose a novel 3D GAN inversion method, WarpGAN. Specifically, we first employ a 3D GAN inversion encoder to project the single-view image into a latent code that serves as the input to the 3D GAN. Then, we warp the image to a novel view using the depth map generated by the 3D GAN. Finally, we develop a novel SVINet, which leverages the symmetry prior and multi-view image correspondence w.r.t. the same latent code to inpaint the occluded regions in the warped image. Quantitative and qualitative experiments demonstrate that our method consistently outperforms several state-of-the-art methods.

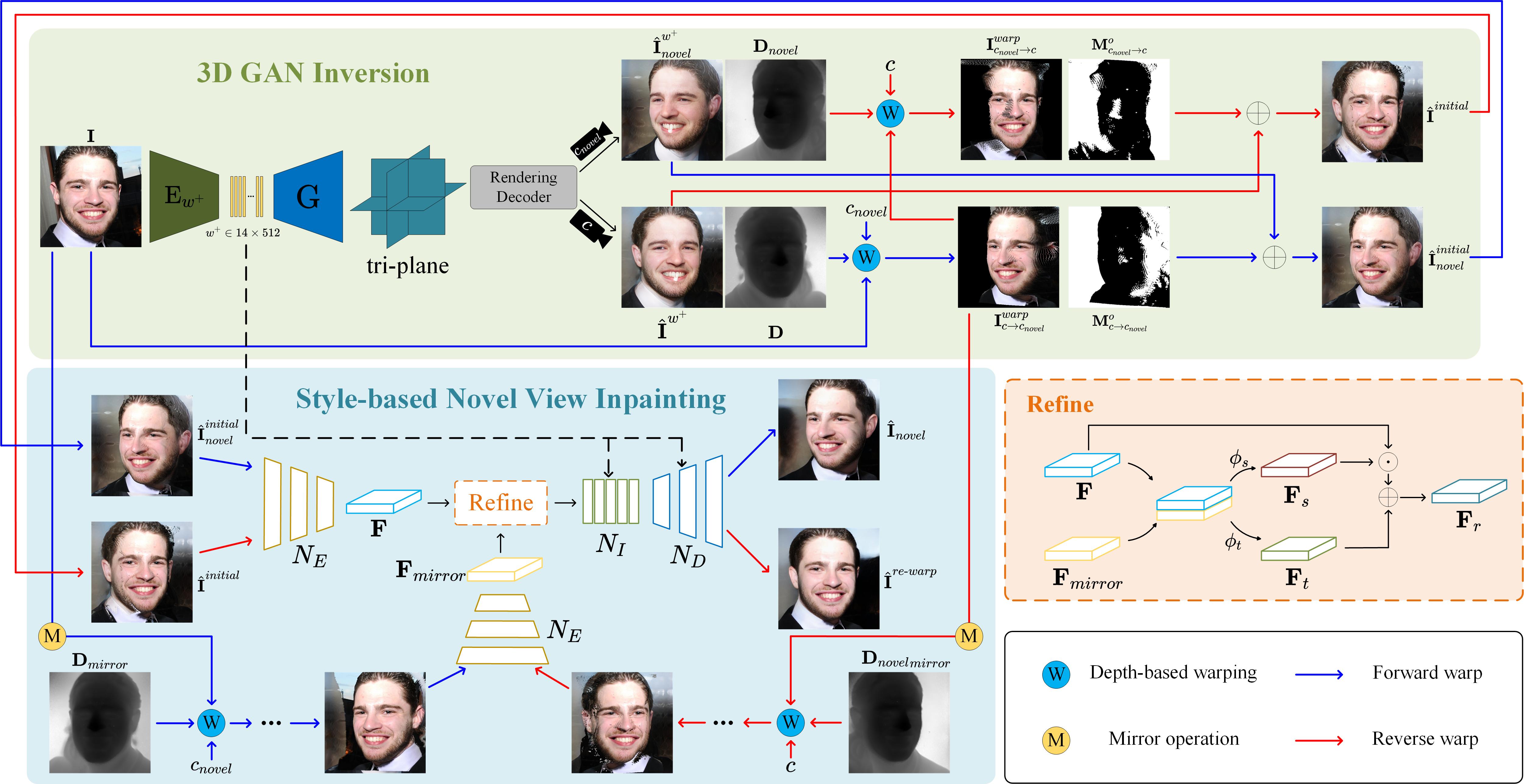

Overview

In this paper, we propose a novel 3D GAN inversion method, WarpGAN, which integrates the warping-and-inpainting strategy into 3D GAN inversion. Specifically, we first train a 3D GAN inversion encoder, which projects the input image into a latent code w+ (located in the latent space W+ of the 3D GAN generator). By feeding w+ into the 3D GAN, we compute the depth map of the input image for geometric warping and perform an initial filling of the occluded regions in the warped image. Subsequently, leveraging the symmetry prior and multi-view image correspondence w.r.t. the same latent code in 3D GANs, we train a style-based novel view inpainting network (SVINet), which inpaints the occluded regions in the image warped from the original view to the novel view. This allows us to synthesize plausible novel view images with multi-view consistency. Because ground-truth novel view images are unavailable, we re-warp the novel view image back to the original view and feed it to SVINet, so that the loss can be calculated between the inpainting result and the input image.
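The geometric warping step can be illustrated with a minimal NumPy sketch of generic depth-based forward warping. This is not the authors' implementation: the function name `warp_to_novel_view`, the pinhole intrinsics `K`, and the 4x4 source-to-target transform `T_src_to_tgt` are assumptions made for illustration, and the depth map is taken as given (in WarpGAN it would come from the 3D GAN). The returned mask marks which target pixels received a value; the remaining pixels are the occluded regions that an inpainting network such as SVINet would need to fill.

```python
import numpy as np

def warp_to_novel_view(image, depth, K, T_src_to_tgt):
    """Forward-warp `image` (H, W, C) into a target view using per-pixel depth.

    Assumes a pinhole camera with intrinsics K (3x3) shared by both views and
    a rigid 4x4 transform from source to target camera coordinates.
    Returns (warped, mask); mask is False where no source pixel landed.
    """
    H, W = depth.shape
    C = image.shape[-1]

    # Homogeneous pixel coordinates of the source view, shape (3, H*W).
    v, u = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    pix = np.stack([u.ravel(), v.ravel(), np.ones(H * W)]).astype(np.float64)

    # Back-project to 3D points in the source camera frame.
    pts_src = (np.linalg.inv(K) @ pix) * depth.ravel()

    # Move the points into the target camera frame and project them.
    R, t = T_src_to_tgt[:3, :3], T_src_to_tgt[:3, 3:4]
    pts_tgt = R @ pts_src + t
    z = pts_tgt[2]
    proj = K @ pts_tgt
    in_front = z > 1e-6
    z_safe = np.where(in_front, z, 1.0)  # avoid division by zero
    u_t = np.rint(proj[0] / z_safe).astype(np.int64)
    v_t = np.rint(proj[1] / z_safe).astype(np.int64)
    inside = in_front & (u_t >= 0) & (u_t < W) & (v_t >= 0) & (v_t < H)

    # Z-buffer: sort candidates nearest-first, then keep the first source
    # pixel that lands on each target pixel.
    idx = np.flatnonzero(inside)
    idx = idx[np.argsort(z[idx], kind="stable")]
    flat_tgt = v_t[idx] * W + u_t[idx]
    tgt_unique, first = np.unique(flat_tgt, return_index=True)
    winners = idx[first]

    warped = np.zeros((H * W, C), dtype=image.dtype)
    mask = np.zeros(H * W, dtype=bool)
    warped[tgt_unique] = image.reshape(-1, C)[winners]
    mask[tgt_unique] = True
    return warped.reshape(H, W, C), mask.reshape(H, W)
```

With an identity transform the output reproduces the input and the mask is fully set; with a sideways camera shift, a band of target pixels receives no source pixel, which is the kind of hole the inpainting stage targets.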

Editing Results

Image attribute editing results obtained by our method.

BibTeX

@misc{huang2025warpganwarpingguided3dgan,
  title={WarpGAN: Warping-Guided 3D GAN Inversion with Style-Based Novel View Inpainting},
  author={Kaitao Huang and Yan Yan and Jing-Hao Xue and Hanzi Wang},
  year={2025},
  eprint={2511.08178},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2511.08178},
}